SCP Core Spec

Version: v1.0 (Draft) Status: Draft Authoritative: Yes

Purpose

This document defines the normative lifecycle and state semantics of SCP.

Canonical protocol terms used here follow SCP Protocol Overview.

It answers:

- how the protocol is structured and what each component does

- how tasks move through the protocol, including classification, composition, and iteration

- how semantic meaning is defined, resolved, and evolved

- how consent, authorization, and privacy budget protect private data

- how multi-Vault coordination and composite tasks extend the basic task model

- how challenge, settlement, and result delivery finalize protocol outcomes

- what a conforming contract boundary must preserve

Part 1: Protocol Architecture

3 Planes + 2 Services

The protocol architecture uses a 3 Planes + 2 Services model.

The three planes represent the sequential task pipeline. Each plane has a clear trust boundary and handoff contract with the next. The two services are cross-cutting: they are accessed by all three planes but owned by neither.

The rationale:

- a "layer" implies encapsulation (each layer wraps the one below, as in OSI). SCP's responsibilities are a sequential pipeline, not nested abstractions

- Data and Governance are genuinely cross-cutting: accessed by multiple pipeline stages, with independent lifecycle and sovereignty concerns

Admission Plane

The Admission Plane is the protocol's public-facing entry point. It receives external requests, validates them, and produces a fully-formed TaskEnvelope that all downstream processing can rely on without re-validation.

Subsystems:

- Identity and Authorization: validate caller identity, verify authorization context against Vault-level permissions

- Consent Verification: verify data-subject consent for personally attributable records within the declared usage scope

- Semantic Resolution: resolve canonical meaning under

semantic_version, bind to registry-compatible references, validate derivation rules and attribute compositions - Privacy Budget Reservation: reserve estimated privacy budget using the reserve-commit model, reject tasks that would exceed available budget

- TaskEnvelope Assembly: assemble the immutable

TaskEnvelopewith all validated fields

Protocol boundary:

- entry: external request from a caller with identity, intent, and authorization

- exit: a validated

TaskEnvelope, or a deterministic rejection with protocol error

Invariants:

- deterministic rejection for invalid input

- no private plaintext leakage through admission responses

- no

TaskEnvelopeproduced without: valid identity, valid authorization, valid consent (where applicable), resolved semantics, reserved privacy budget, and bound policy version - semantic resolution is deterministic: equal input and equal

semantic_versionproduce equalSemanticResolutionResult - cross-domain derivation requires explicit

cross_domain_policyvalidation before the TaskEnvelope is assembled

Execution Plane

The Execution Plane receives a validated TaskEnvelope and produces an ExecutionResultBundle with associated evidence. It manages the full complexity of multi-Vault coordination, composite iteration, and TEE-based computation.

Subsystems:

- Task Orchestration: drive the task state machine through the canonical state transition rules, manage handoffs between subsystems

- Multi-Vault Coordination: fan out to participating Vaults, track per-Vault execution slices, enforce quorum requirements

- Computation: perform authorized computation inside Vault or attested TEE boundary, produce deterministic proof-linked output

- Secure Aggregation: combine per-Vault slice results inside a trusted aggregation environment, enforce cardinality thresholds at the aggregate level

- Composite Iteration Control: manage sub-task sequencing for iterative tasks, track convergence, control round progression

Protocol boundary:

- entry: a validated

TaskEnvelopefrom the Admission Plane - exit: an

ExecutionResultBundlewith proof references, attestation reports, and privacy budget consumption records

Invariants:

- deterministic execution: equal inputs and equal replay tuple produce equal output commitment

- no unauthorized cross-Vault data access

- no plaintext exposure to the coordination subsystem — coordination sees only commitments and metadata

- TEE execution must produce valid

AttestationReportwhere policy requires it - privacy budget consumption must not exceed reservation beyond policy-defined tolerance

- per-Vault slice results must meet local cardinality thresholds before leaving the Vault boundary

- aggregation must not be performed by the task submitter

- composite iteration must respect the iteration policy declared at admission

Settlement Plane

The Settlement Plane receives execution results and produces finalized protocol outcomes. It validates results, manages the challenge lifecycle, and anchors irreversible accounting.

Subsystems:

- Result Verification: validate execution output against task-class-specific acceptance criteria

- Challenge Lifecycle: manage challenge opening, evidence submission, adjudication, and resolution

- Reward Accounting: calculate per-actor reward shares based on finalized settlement context, policy version, and actor staking status (staked vs unstaked Data Producers receive differentiated reward rates as defined by protocol economics)

- Penalty Enforcement: apply penalties for protocol violations detected during verification or challenge

- Payout Execution: trigger irreversible payout based on finalized reward accounting

Protocol boundary:

- entry:

ExecutionResultBundlewith evidence chain from the Execution Plane - exit:

SettlementRootwith finalized accounting, reward distribution, and penalty records

Invariants:

- no settlement finalization without accepted verification

- challenge evidence must be replayable

- verification must not rewrite execution history

- deterministic root composition: equal inputs produce equal

SettlementRoot - append-only finalized history — no retroactive modification

- candidate context may support challenge opening; finalized context is required for irreversible accounting

Data Sovereignty Service

The Data Sovereignty Service is a cross-cutting service accessed by all three planes. It is not a pipeline stage — it is a persistent service that manages the lifecycle of private data independently of any single task.

Responsibilities:

- Record Storage and Commitment: preserve canonical private records, maintain

Commitment = SHA256(Serialize(CR))linkage, manage encrypted storage - Consent Management: maintain consent records per data subject, validate consent references against usage scope declarations

- Privacy Budget Ledger: maintain

PrivacyBudgetLedgerper data subject per usage scope, process reservation and commitment operations - Vault Access Control: enforce Vault-level authorization policies, provide authorized record material only after consent and authorization verification

Access pattern:

- Admission Plane: reads consent records and privacy budget availability to validate admission

- Execution Plane: reads authorized record material during computation, commits privacy budget consumption

- Settlement Plane: reads privacy budget records for verification, may trigger budget-related penalties

Invariants:

- no plaintext escape outside authorized Vault or TEE boundaries

- no record access without valid consent for the declared usage scope

- auditable access linkage between task, authorization, consent, and record usage

- privacy budget operations follow the reserve-commit model

- the service is authoritative for all data sovereignty decisions — no plane may override its access control

Governance Service

The Governance Service is a cross-cutting service that manages protocol configuration, actor lifecycle, and collective decision-making. Like the Data Sovereignty Service, it is accessed by all planes but owned by none.

Responsibilities:

- Policy Version Management: maintain policy version lifecycle (

draft→active→deprecated→retired), enforce epoch-boundary transitions, ensure no retroactive application - Epoch Management: manage epoch lifecycle (

open→closing→closed), assign tasks and sub-tasks to epochs, manage cross-epoch composite task accounting - Actor Registry and Staking: maintain actor identities, role bindings, three-tier staking requirements (

S_masterfor master nodes,S_enterprisefor enterprise nodes,S_producerfor optionally staking Data Producers), and slashing conditions - Governance Proposals: manage governance proposals for policy changes, attribute promotion, challenge adjudication, and protocol upgrades

Access pattern:

- Admission Plane: reads active policy version and epoch state for task validation

- Execution Plane: reads actor registry for executor assignment, reads epoch for settlement assignment

- Settlement Plane: reads policy version for reward/penalty calculation, reads actor staking status for slashing and differentiated reward rates

Invariants:

- at most one policy version is

activefor a given scope at any time - policy version transitions occur only at epoch boundaries

- tasks are evaluated under the policy version active at their admission time, regardless of subsequent changes

- governance decisions require the quorum and process defined in the active policy version

Part 2: Task Model

This part defines the protocol's primary work unit: the task. It covers how tasks are classified, what they contain, and how they move through the protocol.

Task Lifecycle

The canonical task lifecycle is:

acceptedresolvingdispatchedverifyingawaiting_settlementcompletedchallengedrejectedtimed_out

These states define protocol meaning, not a particular process layout.

State Transition Rules

The following transitions are the only legal protocol transitions. Any transition not listed here is a protocol violation.

Normal forward path:

accepted→resolving: task enters semantic resolutionresolving→dispatched: semantic resolution succeeds, task is assigned for executiondispatched→verifying: execution completes, result enters verificationverifying→awaiting_settlement: verification accepts the resultawaiting_settlement→completed: settlement finalizes

Rejection and failure paths:

accepted→rejected: admission-time validation failure discovered after initial acceptanceresolving→rejected: semantic resolution fails (unresolved domain, incompatible attributes)dispatched→rejected: execution produces invalid or policy-violating outputverifying→rejected: verification rejects the resultaccepted→timed_out: task exceeds admission-to-dispatch timeoutdispatched→timed_out: execution exceeds dispatch-to-result timeoutverifying→timed_out: verification exceeds configured timeout

Challenge path:

verifying→challenged: verifier or governance actor opens a challenge against the resultawaiting_settlement→challenged: challenge opened against a result that has not yet finalizedchallenged→awaiting_settlement: challenge resolved asnot_confirmedorclosed_without_penalty, task returns to settlement pathchallenged→rejected: challenge resolved asapprovedwith penalty, task is rejected

Terminal states:

completed,rejected, andtimed_outare terminal. No further transitions are permitted from these states.

Transition Invariants

The protocol requires:

- every transition to be recorded as an auditable protocol event with timestamp and actor reference

- no transition to skip intermediate states (e.g.,

acceptedcannot jump directly toverifying) - the replay of a task's transition history to reconstruct the same sequence under the same inputs

challengedto be the only state that can interrupt the forward path and create a loop

Task Classification

The protocol recognizes distinct task classes because different classes impose different protocol constraints on execution, verification, privacy, and resource accounting.

Canonical Task Classes

parse: single-record interpretation within one Vault boundary, such as receipt OCR or document extractionquery: governed retrieval, filtering, or audience selection that may span multiple Vaults through privacy-preserving projectionscompute: stateless or bounded computation against authorized record material, such as eligibility evaluation or feature derivationtrain: iterative model-training work that may require multiple execution rounds and cross-Vault feature aggregation

Classification Rules

The protocol requires:

- every admitted task to carry an explicit

task_class task_classto be fixed at admission and immutable for the lifetime of the task- verification strategy, privacy constraints, and reward accounting to be parameterized by

task_class - policy to define which

task_classvalues require TEE execution, multi-Vault coordination, or privacy budget consumption

Class-Specific Protocol Constraints

For query class tasks:

- execution must operate on query-safe projections or authorized Vault-side evaluation rather than unrestricted plaintext export

- result cardinality constraints such as audience caps must be enforced as protocol invariants, not advisory limits

- privacy budget consumption must be recorded per query execution

For compute class tasks:

- computation must be bounded: the task must declare maximum resource consumption (time, memory, or work units) at admission

- execution must be deterministic: equal inputs and equal replay tuple must produce equal output

- unlike

query, the output is a derived value or artifact rather than a filtered record set - unlike

train, the execution is single-pass and non-iterative

For train class tasks:

- the task may be realized as a composite task with multiple iterative rounds

- intermediate artifacts such as gradients or partial model updates are protocol-visible only as commitment references, not as plaintext

- convergence or quality thresholds defined at admission become verification acceptance criteria

TaskEnvelope

TaskEnvelope is the canonical protocol object produced by the Admission Plane upon successful task admission. It is the primary input to all downstream protocol processing.

TaskEnvelope Fields

A TaskEnvelope must preserve at least:

task_id: unique protocol-assigned task identitytask_class: one ofparse,query,compute,traincaller_context: identity and role of the task submitterauthorization_context: Vault-level authorization referencesconsent_references: list of consent references for personally attributable records, where applicablesemantic_version: the semantic version governing this taskpolicy_version: the policy version governing this taskepoch_id: the epoch to which this task is assigned for settlement purposesattribute_composition: the declaredAttributeCompositionfor multi-attribute tasks, or null for single-attribute tasksderivation_rule_refs: list ofDerivationRulereferences used by the taskbudget_lock_ref: reference to the locked monetary budget for this task, or null forparsetasks (which are free for the Data Producer under the protocol economics model)privacy_budget_reservation: reserved privacy budget for this taskcoordination_envelope: for multi-Vault tasks, the coordination envelope; null for single-Vault tasksiteration_policy: for composite tasks, the iteration policy; null for atomic tasksadmission_timestamp: protocol-assigned admission timetask_timeout: maximum allowed time from admission to completion

TaskEnvelope Invariants

The protocol requires:

- every field in the TaskEnvelope to be immutable after admission

- the TaskEnvelope to be the authoritative source for all downstream protocol decisions about this task

- the TaskEnvelope to be included in the task's replay tuple

- no downstream plane or service to override or extend the TaskEnvelope's declared constraints

Part 3: Semantic Model

This part defines how meaning is established within the protocol. It covers the namespace hierarchy (domains), the attribute system (canonical, query, and local attributes), and how attributes evolve over time.

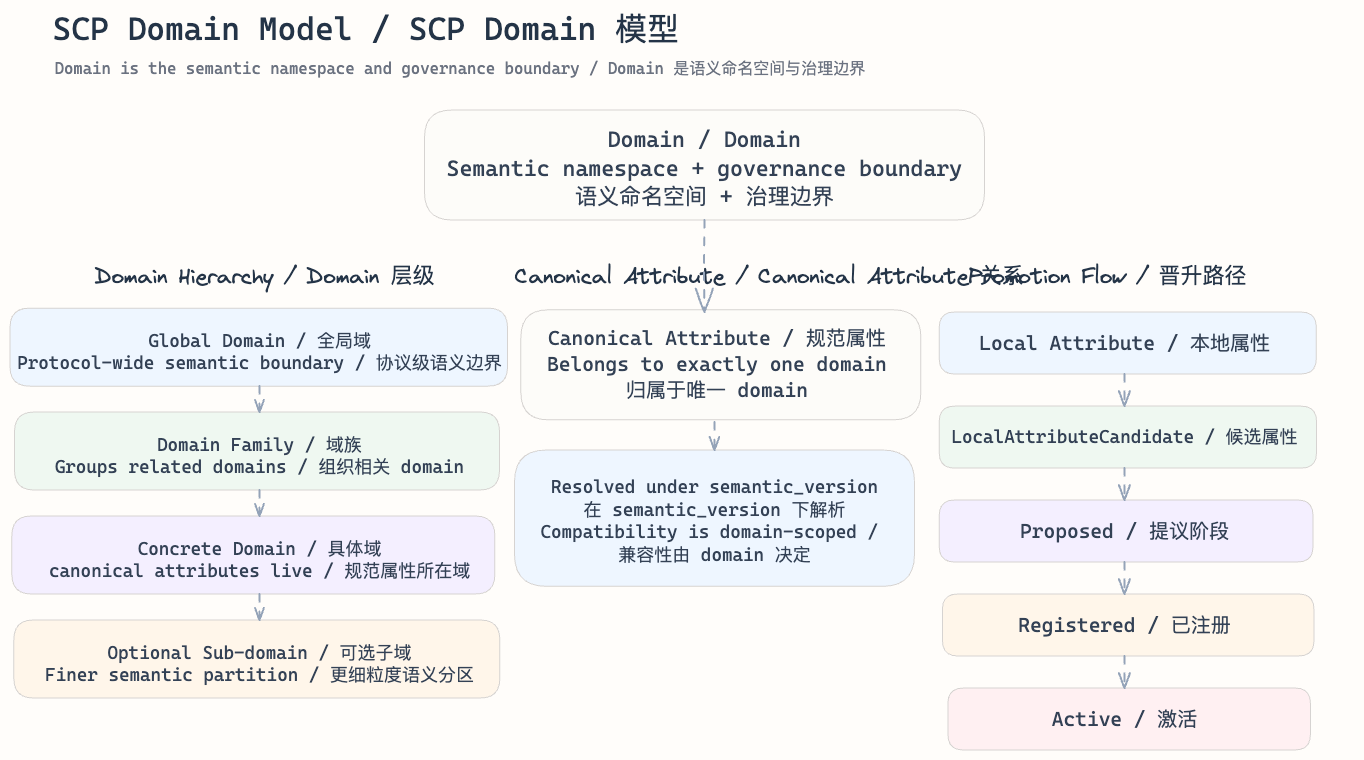

Domain Model

Domain is the semantic namespace and governance boundary within which canonical meaning is defined.

It exists so that:

- semantic interpretation has a stable namespace

- canonical attributes have an explicit ownership boundary

- compatibility and deprecation are governed within a declared semantic scope

Domain Layering

The protocol recognizes the following semantic layering:

global domain: shared protocol-wide semantic governance boundarydomain family: a higher-level namespace grouping related domainsconcrete domain: the direct namespace in which canonical attributes are registered and resolved- optional

sub-domain: a narrower namespace where policy explicitly allows finer partitioning

Implementations may realize these layers differently, but protocol semantics must preserve the same hierarchy.

Domain Responsibilities

A domain is responsible for:

- defining the namespace in which canonical attributes live

- constraining compatibility and evolution under

semantic_version - determining whether cross-domain references are allowed, rejected, or mediated

- providing the semantic boundary against which resolution and governance decisions are evaluated

Core Domain Fields

A protocol-visible domain definition should preserve at least:

domain_idparent_domain_idwhere applicabledomain_versionsemantic_versionstatus

Domain Status

The canonical domain status model is:

proposedregisteredactivedeprecatedretired

Only active domains, and deprecated domains where compatibility policy allows, may participate in new semantic resolution.

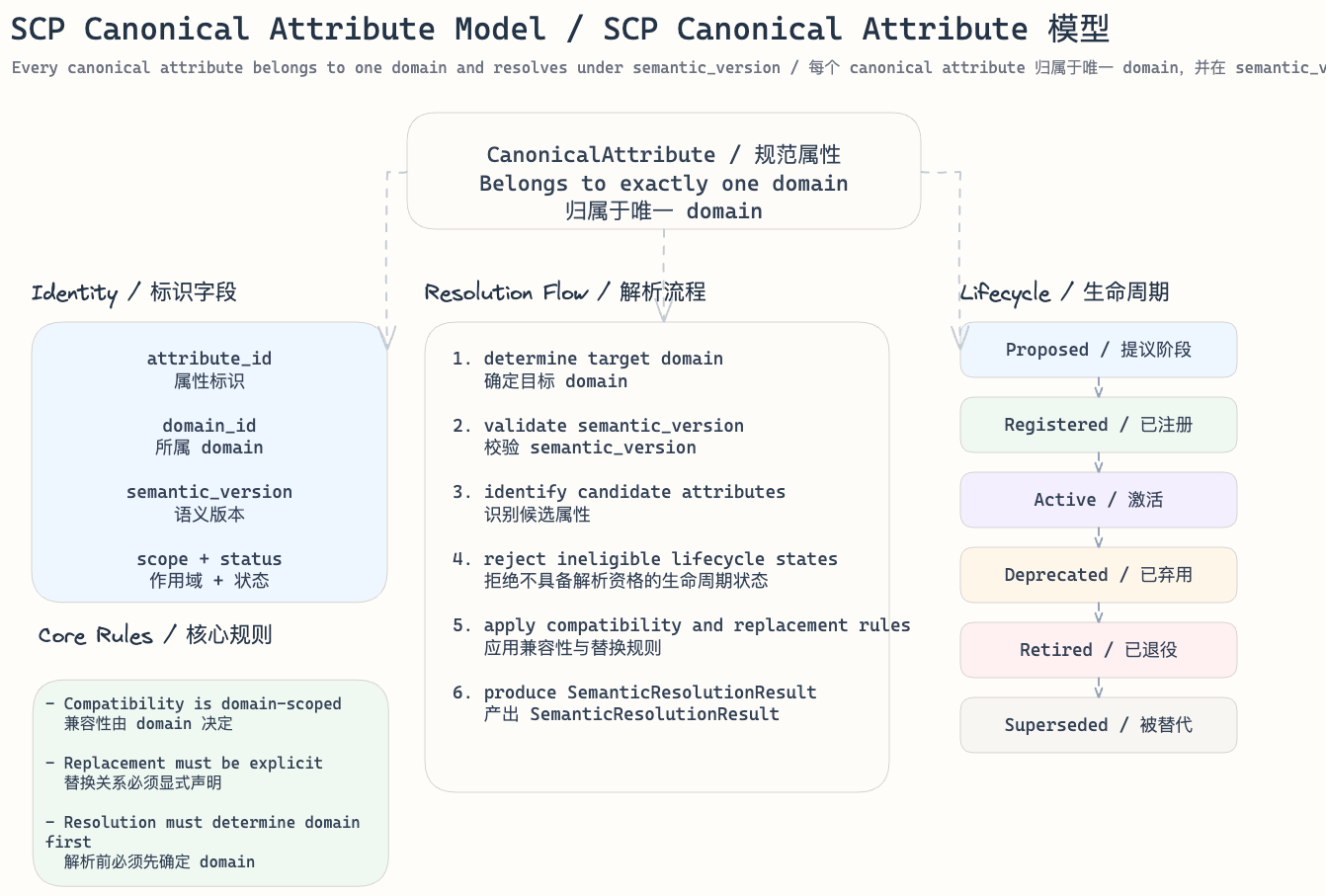

Canonical Attribute Model

CanonicalAttribute is a protocol object representing a standardized semantic attribute within a declared domain. It defines protocol-recognized semantic truth.

Every canonical attribute belongs to exactly one domain. It is not free-floating.

Canonical Attribute Identity

Protocol identity should preserve at least:

attribute_iddomain_idsemantic_version

Where lifecycle or compatibility matters, the object should also preserve:

scopestatuseffective_fromdeprecated_fromwhere applicableretired_fromwhere applicablesupersedesor replacement reference where applicable

Where direct query use is relevant, the object should also preserve:

directly_queryable: whether this canonical attribute may be used in query projections without creating a separateQueryAttributedefault_query_granularity: the defaultQueryGranularityapplied when the attribute is queried directlydefault_privacy_class: the default privacy classification applied when the attribute is queried directly

Directly Queryable Canonical Attributes

When directly_queryable is true, the protocol allows this canonical attribute to participate in governed query workflows without requiring a separate QueryAttribute object. This avoids redundant 1:1 mirroring for attributes where the canonical value is already query-safe at a governed granularity.

The protocol requires:

directly_queryableto be set explicitly during attribute registration, not inferred- direct query use to apply the

default_query_granularityanddefault_privacy_classdeclared on the canonical attribute - tasks requiring non-default granularity, custom derivation, or cross-domain composition to use a separate

QueryAttributewith an explicitDerivationRule - privacy budget consumption to apply equally whether a query uses a directly queryable canonical attribute or a separate query attribute

Canonical Attribute Rules

The protocol requires:

- every canonical attribute to belong to exactly one declared domain at a given semantic point

- attribute compatibility to be interpreted relative to domain and

semantic_version - replacement to be explicit rather than implicit when an attribute is superseded

- semantic resolution to determine domain before selecting canonical attribute meaning

directly_queryablestatus to not change the canonical truth of the attribute, only its availability for query projection

Canonical Attribute Lifecycle

The canonical lifecycle is:

proposedregisteredactivedeprecatedretiredsuperseded

Lifecycle meaning:

proposed: recognized by governance workflow but not protocol-usableregistered: recorded in the namespace but not yet active for the target semantic windowactive: eligible for semantic resolution and new task usedeprecated: still compatibility-visible but not preferred for new semantic authoringretired: not eligible for new task resolutionsuperseded: replaced by an explicit successor attribute under governed compatibility rules

Lifecycle rules:

- only

active, and policy-permitteddeprecated, attributes may resolve for new task admission retiredattributes must not be selected for new task resolution- lifecycle transitions to be bound to declared semantic governance and

semantic_version supersededattributes to point explicitly to the replacement path where one exists- equal task input and equal

semantic_versionto produce equal attribute resolution outcomes

Canonical Attribute Resolution Flow

For protocol purposes, canonical attribute resolution follows this logical flow:

- determine the target domain from task scope, semantic references, and policy context

- validate that the domain is eligible under the requested

semantic_version - identify candidate canonical attributes within that domain

- reject candidates whose lifecycle state is not resolution-eligible

- apply compatibility and replacement rules

- produce deterministic semantic references in

SemanticResolutionResult

Resolution consequences:

- unresolved domain or attribute state blocks progression into execution

- ambiguous domain or attribute mapping is rejected rather than guessed

- domain retirement or attribute retirement affects only the semantic windows governed by the relevant versioning rules

- replay of the same task under the same semantic inputs reconstructs the same domain and canonical attribute selection

Query Attribute Model

QueryAttribute is a governed semantic object representing a query-safe projection derived from protected records for audience selection, eligibility filtering, retrieval, or related governed query work.

It is not a replacement for CanonicalAttribute, and it does not redefine protocol truth.

Query Attribute Identity

Protocol identity should preserve at least:

query_attribute_iddomain_idsemantic_version

Where governance, privacy, or compatibility matters, the object should also preserve:

statusderivation_rule_id: reference to theDerivationRulethat produces this query attributequery_granularity: a structuredQueryGranularityobject defining the projection resolutionprivacy_classallowed_usage_scope

Query Granularity Model

QueryGranularity is a structured protocol object that defines the resolution at which a query attribute exposes information. Granularity directly affects privacy: coarser granularity discloses less and consumes less privacy budget.

A QueryGranularity should preserve at least:

temporal_resolution: the time precision of the projection, one ofexact,day,week,month,quarter,yearspatial_resolution: the geographic precision, one ofexact,district,city,region,countrynumeric_resolution: for numeric values, the bucketing specification (bucket boundaries and width) ornullfor non-numeric attributescategorical_resolution: for categorical values, one ofexact,group,binary

The protocol requires:

- query granularity to be fixed at query attribute registration and immutable unless the attribute is superseded

- finer granularity to require higher

privacy_classand greater privacy budget consumption - granularity to be a factor in deterministic replay: equal granularity and equal input must produce equal projection output

Derivation Rule Model

DerivationRule is a protocol object that formally defines how a QueryAttribute is derived from one or more source attributes.

Derivation rules exist because the relationship between canonical truth and query projection must be explicit, auditable, and deterministic to support replay and privacy guarantees.

Derivation Rule Identity

A derivation rule should preserve at least:

rule_idsource_attributes: a list of source references, each specifyingdomain_idandattribute_idtransform_type: the category of transformation applied, one ofpassthrough,bucket,hash,boolean,aggregate,threshold,compositetransform_params: parameters specific to the transform type (bucket boundaries, hash method, threshold value, aggregation function, etc.)output_type: the value type of the derived query attributesemantic_versionprivacy_impact_epsilon: the estimated per-execution privacy cost of this derivation

Derivation Rule Types

The protocol recognizes the following transform types:

passthrough: the source canonical value is projected without transformation, subject only to granularity coarseningbucket: the source value is mapped to a discrete range (e.g., amount ¥42.50 becomes range ¥20-50)hash: the source value is replaced by a cryptographic hash for linkage without disclosureboolean: the source value is reduced to a true/false signal (e.g., "has purchased" rather than purchase details)aggregate: multiple source records are combined into a summary statistic (e.g., count, sum, average)threshold: the source value is compared against a boundary to produce a binary or categorical output (e.g., "purchased within last 30 days")composite: multiple source attributes are combined through a defined function

Cross-Domain Derivation

When a derivation rule references source attributes from multiple domains, additional constraints apply:

- every referenced domain must be

activeor policy-permitteddeprecated - each cross-domain reference must carry an explicit

cross_domain_policyofallowed,mediated, ordenied mediatedcross-domain references require a declaredmediation_authority(a governance actor authorized to approve the cross-domain binding)- cross-domain derivation rules must be registered in the governance workflow before they can be used for query attribute creation

Derivation Rule Requirements

The protocol requires:

- every

QueryAttributethat is not derived from adirectly_queryablecanonical attribute to reference an explicitDerivationRule - derivation rules to be deterministic: equal source values, equal transform parameters, and equal granularity must produce equal output

- derivation rules to be versioned under

semantic_versionand immutable once registered - derivation rule changes to require a new rule registration and a new query attribute, not in-place mutation

privacy_impact_epsilonto be used as the basis for privacy budget consumption when the derived query attribute is used in a task

Query Attribute Rules

The protocol requires:

- every query attribute to belong to a declared domain or governed query namespace

- query projections to be derived under an explicit

DerivationRulerather than inferred silently - query attributes to remain distinguishable from canonical semantic truth

- query projection compatibility to remain version-aware under

semantic_version - reconstructable raw private values not to be emitted merely because a query projection exists

- the

DerivationRuleto be auditable as part of any task evidence chain that uses the query attribute

Query Attribute Lifecycle

The canonical lifecycle is:

proposedregisteredactiverestricteddeprecatedretired

Lifecycle meaning:

proposed: recognized for governance review but not query-usableregistered: defined in the namespace but not yet active for the target query windowactive: eligible for governed query projection and retrieval workflowsrestricted: query-usable only under narrower policy or actor scopedeprecated: still compatibility-visible but not preferred for new query designretired: no longer eligible for new projection or query use

Query Projection Constraints

The protocol requires:

- projection to occur downstream from Vault privacy boundaries

- query-safe projections to preserve auditable linkage back to source commitments or protected records

- query indexes to carry only the minimum governed signal needed for retrieval

- audience-selection or campaign-eligibility work not to rely on silently changing query semantics

- replay under the same semantic and policy inputs to reconstruct the same query interpretation

Attribute Composition Semantics

Real-world queries and computations almost always combine multiple attributes. SCP defines attribute composition as a protocol-level concern because composition directly affects privacy risk.

Why Composition Matters

Individual attribute queries may each be safe, but their combination can narrow result sets enough to re-identify individuals. For example, querying "Singapore users" and "Luckin customers" independently may each return large sets, but their intersection may be small enough to compromise anonymity.

Composition Identity

An attribute composition should preserve at least:

composition_idoperator: the logical operation, one ofAND,OR,NOT,FILTER,JOINoperand_attributes: list ofquery_attribute_idordirectly_queryablecanonical attribute references that participate in the compositioncombined_privacy_class: the privacy classification of the composed result, which must be at least as restrictive as the highestprivacy_classamong the operandsminimum_result_cardinality: the minimum number of records in the composed result below which the result must be suppressed or generalized

Composition Rules

The protocol requires:

- every multi-attribute query or computation task to declare its attribute composition at admission

combined_privacy_classto be computed as the maximum of the operand privacy classes, or higher if policy requires- privacy budget consumption for a composed query to reflect the composition, not merely the sum of individual attribute costs

minimum_result_cardinalityto be enforced at two levels:- per-Vault level: each Vault's slice result must meet local cardinality thresholds before leaving the Vault boundary

- aggregation level: the aggregate result must meet global cardinality thresholds, enforced inside the aggregation TEE before the result is released

- results below the threshold at either level to be suppressed or generalized before leaving the enforcement boundary

- attribute composition to be recorded as part of the task replay tuple so that audit can reconstruct the same composition logic

Composition and Privacy Budget Interaction

The protocol requires:

- composed queries to consume privacy budget once for the composition, not separately for each operand attribute

- the privacy cost of a composition to be at least as high as the most expensive individual operand, and may be higher based on the operator and the number of operands

- repeated identical compositions against the same data subjects to consume additional budget with each execution

Semantic Attribute Layers

Semantic objects appear in three related layers:

CanonicalAttribute: defines protocol-recognized semantic truth (what a record means)QueryAttribute: defines governed query projections (which signals may be projected for retrieval)LocalAttribute: remains inside a Vault or local semantic boundary until promotion (emerging semantics not yet canonical)

The normal ordering is:

- local or raw semantic material first appears inside a Vault

- canonical resolution determines stable protocol meaning

- query projections are derived from canonical or policy-permitted local material for governed retrieval

A field may be useful for query but still remains canonical-first in semantic meaning.

Local Attribute Pool

LocalAttribute is a local semantic artifact that has not yet been admitted as a protocol-recognized canonical attribute.

Local Pool Purpose

The local attribute pool exists so that implementations can:

- accumulate unresolved or locally meaningful attributes

- measure frequency, stability, and mapping confidence over time

- produce privacy-preserving candidate signals for later promotion

Local Pool Rules

The protocol requires:

- local attributes to remain local until promotion preconditions are met

- raw private values or reconstructable sensitive semantic material not to be emitted directly for cross-Vault aggregation

- local pool records to preserve enough metadata to support later audit and promotion review

Minimum Local Signals

A local pool entry should preserve at least:

- local attribute identifier

- candidate domain hint

- local occurrence frequency

- local stability indicators across epochs

- mapping confidence against existing canonical attributes where applicable

- privacy-safe feature summary or evidence summary

Cross-Vault Candidate Aggregation

The protocol allows local pools to contribute to a cross-Vault candidate pool, but only through privacy-preserving candidate summaries.

The default promotion aggregation window is every 10 epochs for regular candidate formation. Policy may allow every 5 epochs for high-activity domains, or exceptional governance-triggered proposal outside the normal window.

Cross-Vault aggregation must produce:

LocalAttributeCandidatesummaries- candidate domain binding

- coverage, frequency, and stability indicators

- compatibility, conflict, or alias signals relative to existing canonical attributes

It must not directly produce an active canonical attribute.

The protocol requires:

- cross-Vault aggregation to exchange candidate summaries rather than raw private local attributes

- candidate formation to be auditable at the evidence-summary level

- aggregation to remain downstream from Vault privacy boundaries

Promotion Paths

The protocol recognizes two distinct promotion paths for local attributes, reflecting that an attribute may become useful for query before it is stable enough to be canonical truth.

Promotion to Vault-Scoped Query Attribute:

A local attribute may be promoted to a vault_scoped_query attribute when it is useful for Vault-internal queries but has not yet achieved the cross-Vault stability required for canonical status.

The vault-scoped query promotion path is:

local attributevault_scoped_query(a restrictedQueryAttributewhoseallowed_usage_scopeis limited to the source Vault)

Promotion preconditions for vault-scoped query:

- stability within the source Vault across at least the configured minimum epoch window

- a declared derivation rule that produces a query-safe projection

- a declared privacy class and granularity

- Vault operator approval

Vault-scoped query attributes:

- must not participate in cross-Vault query tasks

- may participate in single-Vault tasks where the source Vault is also the executing Vault

- may later be promoted to a full query attribute if the underlying local attribute achieves canonical promotion

- carry the lifecycle of a standard query attribute but with

allowed_usage_scoperestricted tovault_local

Promotion to Canonical Attribute:

The canonical promotion path is:

local attributelocal attribute poolLocalAttributeCandidateproposed canonical attributeregisteredactive

Promotion preconditions:

- sufficient cross-Vault coverage or justified governance exception

- stability across the required epoch window

- clear domain binding

- no unresolved conflict with existing canonical attributes, or an explicit alias/replacement declaration

- replayable evidence summary supporting the promotion decision

Promotion consequences:

- local attributes remain local when promotion thresholds are not met

- candidate aggregation is a proposal mechanism, not an automatic semantic truth generator

- new canonical attributes enter the normal lifecycle of

proposed -> registered -> active - conflict resolution is governed explicitly rather than inferred silently

Part 4: Data Protection

This part defines the three mechanisms that protect private data: consent and authorization (who may access), privacy budget (how much may be disclosed), and TEE (where computation happens).

Consent and Authorization

SCP distinguishes between two layers of authorization that must both be satisfied before data can participate in protocol work.

Vault-Level Authorization

Vault-level authorization governs which actors and task classes may access record material inside a Vault boundary.

The protocol requires:

- every data access to carry an explicit

authorization_contextlinking the requesting task to a Vault-recognized permission - Vault operators to enforce authorization independently of the coordination layer

- authorization grants to be scoped by

task_class,domain_id,actor_id, and optionally by time window or usage purpose - authorization state to be auditable and replayable

Data-Subject Consent

Data-subject consent governs whether the individual whose data is stored has agreed to a specific category of usage.

The protocol requires:

- every task that accesses personally attributable records to carry a

consent_referencelinking the access to a recorded consent decision - consent to be scoped by usage purpose, such as

personal_use,marketing,analytics, ortraining - consent to be revocable, with revocation taking effect for new task admission but not retroactively invalidating already-finalized settlements

- consent state to be stored inside the Vault boundary and not exported as plaintext

Consent Lifecycle

The canonical consent state model is:

granted: the data subject has actively consented to the declared usage scopewithdrawn: the data subject has revoked consent, blocking new task admission for the affected scopeexpired: the consent has reached its declared time limit

Consent and Task Interaction

The protocol requires:

- task admission to verify consent status before accepting work that touches personally attributable records

- consent withdrawal during task execution to not abort already-dispatched work, but to block future task admission for the same scope

- consent status to be recorded as part of the task's replay tuple so that audit can confirm consent was valid at admission time

Privacy Budget and Guarantees

The protocol governs cumulative privacy exposure to prevent repeated queries or computations from gradually reconstructing private records.

Privacy Budget Model

A privacy budget is a protocol-tracked resource that bounds the cumulative information disclosed about a data subject or record set through protocol-authorized work.

The protocol requires:

- every Vault to maintain a

PrivacyBudgetLedgertracking cumulative disclosure per data subject, per usage scope - privacy budget to be parameterized by

privacy_classas declared on query attributes and task admission policy - budget consumption to be recorded at execution time and committed as part of the task evidence chain

Budget Accounting Fields

A privacy budget entry should preserve at least:

subject_idorrecord_scope: the data subject or record set whose budget is being consumedusage_scope: the declared purpose, such asmarketing,analytics, ortrainingconsumed_epsilon: the privacy loss incurred by this task, expressed in differential-privacy epsilon units where applicabletask_id: the task that caused the consumptionepoch_id: the epoch in which consumption occurred

Budget Enforcement Rules

The protocol uses a reserve-commit model to prevent race conditions between concurrent tasks:

- at admission, the protocol reserves the estimated privacy budget for the task, reducing the available budget for subsequent admission checks

- tasks that would exceed the remaining budget (after accounting for existing reservations) for any affected data subject are rejected at admission

- at execution, the protocol commits the actual consumption, which may differ from the reservation within policy-defined tolerance

- if actual consumption exceeds the reservation beyond tolerance, the task is flagged for governance review

- if a task fails or is rejected before execution, the reservation is released, restoring the available budget

- if a task fails after execution has begun, the reservation is committed at the estimated level, because execution may have already disclosed information

- committed consumption is append-only and not reversible

- budget replenishment occurs only at epoch boundaries under explicit governance policy, not automatically

Privacy Guarantees by Task Class

For query tasks:

- audience-selection results must not allow the task submitter to determine whether a specific individual is in the result set, unless the individual's consent explicitly permits identifiable targeting

- result cardinality below a policy-defined minimum threshold must cause the result to be suppressed or generalized

For train tasks:

- per-round gradient or update contributions must be aggregated across a policy-defined minimum number of Vaults before release

- model artifacts must not memorize or allow extraction of individual training records, verifiable through protocol-defined evaluation criteria

Budget and Consent Interaction

The protocol requires:

- consent withdrawal to freeze the affected data subject's budget, preventing further consumption

- budget state to be visible to the Vault operator and auditable by governance

- budget exhaustion to not revoke already-finalized settlements but to block future task admission for the affected scope

TEE Trust and Attestation Requirements

SCP treats Trusted Execution Environments (TEE) as a protocol-recognized execution boundary alongside Vault boundaries. Because TEE provides hardware-enforced isolation, it requires distinct protocol-level trust rules.

TEE Role in the Protocol

TEE serves as:

- a protected execution environment where authorized computation runs on private record material

- an alternative to Vault-internal execution when computation must be performed by an actor that does not directly operate the source Vault

- an environment that can provide cryptographic attestation that a specific computation ran on specific inputs inside a verified enclave

TEE Attestation Requirements

The protocol requires:

- every TEE-executed task to carry an

AttestationReportlinking the execution to a verified enclave identity AttestationReportto preserve at least: enclave identity, enclave measurement hash, platform identity, and freshness nonce- the verification subsystem to validate attestation before accepting TEE-executed results

- attestation to be replayable as part of the task evidence chain

TEE and Vault Boundary Relationship

The protocol defines:

- a Vault may delegate execution to a TEE when the task requires computation that the Vault operator cannot or chooses not to perform locally

- record material transferred from Vault to TEE must be encrypted in transit and decrypted only inside the attested enclave

- TEE must not retain record material after task execution completes

- the protocol treats TEE as a temporary extension of the Vault privacy boundary, not as an independent data custodian

TEE Failure Handling

The protocol requires:

- TEE platform failure during execution to result in

timed_outfor the affected task or sub-task - attestation failure to result in

rejectedat the verification subsystem - TEE side-channel vulnerabilities disclosed after settlement to be addressable through the challenge lifecycle

- TEE platform revocation by the hardware vendor to block new task dispatch to affected enclaves under governance policy

When TEE Execution Is Required

The protocol does not mandate TEE for all task classes. Policy determines TEE requirements:

parsetasks on single-Vault data may execute inside the Vault without TEEquerytasks that evaluate cross-Vault eligibility should execute inside TEE when the computation touches record material from a Vault that the executor does not operatecomputeandtraintasks involving cross-Vault record material must execute inside attested TEE unless all participating Vaults explicitly permit non-TEE execution under policy

Part 5: Execution Model

This part defines how tasks execute in practice, covering the complexities of multi-Vault coordination and composite (iterative) tasks that extend the basic single-Vault, single-pass model.

Multi-Vault Task Coordination

Many platform capabilities require a single task to access records distributed across multiple independently governed Vaults. SCP defines the protocol semantics for such coordination.

When Multi-Vault Coordination Applies

Multi-Vault coordination is required when:

- a

querytask must evaluate eligibility or selection criteria against records in more than one Vault - a

computetask requires feature material from multiple Vault boundaries - a

traintask must aggregate gradients, features, or model updates derived from records in different Vaults

Single-Vault tasks, such as personal receipt parsing, do not require multi-Vault coordination.

Fan-Out and Aggregation Model

The protocol decomposes a multi-Vault task into:

- a

coordination envelopethat carries the shared admission, semantic, and policy context - per-Vault

execution slicesthat run independently inside each participating Vault boundary - a

secure aggregation stepthat combines per-Vault results without exposing individual Vault plaintext

Coordination Envelope

A coordination envelope preserves:

parent_task_idparticipating_vault_ids: the set of Vaults that must contributeminimum_vault_quorum: the minimum number of Vaults that must complete for the result to be validaggregation_method: the protocol-recognized method for combining results, such asunion,intersection,secure_sum, orfederated_averageper_vault_timeout: the maximum time each Vault has to respond before the slice is treated as timed out

Per-Vault Execution Slice

Each execution slice:

- follows the canonical task lifecycle independently within its Vault boundary

- produces a

SliceResultBundlecontaining only commitment references and privacy-safe outputs - does not expose plaintext to the coordination layer or to other Vaults

Secure Aggregation

The protocol requires:

- aggregation to operate on privacy-safe slice outputs, not on raw Vault plaintext

- the aggregation method to be fixed at task admission and auditable

- aggregation to be deterministic: equal slice inputs and equal aggregation method must produce equal aggregate output

- cardinality constraints such as audience caps to be enforced at the aggregation step where they span Vault boundaries

Aggregation Trust Model

Secure aggregation is a trust-critical step because the aggregator receives outputs from multiple Vaults. The protocol defines the following trust requirements:

Aggregation execution environment:

- aggregation must execute inside one of: (a) an attested TEE, (b) a secure multi-party computation protocol where no single party sees all inputs, or (c) a homomorphic encryption scheme where aggregation operates on ciphertexts

- TEE-based aggregation is the default and must carry an

AttestationReportin the aggregation evidence - the choice of aggregation environment must be declared in the coordination envelope and is immutable for the task

Aggregation actor:

- the aggregator is a protocol actor subject to the same staking, penalty, and governance rules as an

Executor - the aggregator must not be the same entity as the task submitter, to prevent the requester from observing per-Vault outputs

- the aggregator must not retain per-Vault slice outputs after producing the aggregate result

Aggregation evidence:

- the aggregate result must carry a cryptographic proof or attestation linking the output to the declared inputs and method

- verification must validate the aggregation evidence before accepting the aggregate result

- aggregation evidence must be replayable as part of the task evidence chain

Partial Completion

The protocol requires:

- if fewer Vaults than

minimum_vault_quorumcomplete, the parent task must berejectedortimed_out - if the quorum is met but some Vaults did not respond, the aggregation proceeds over the available slices and the result carries an explicit

partial_coverageflag - verification must evaluate whether partial coverage is acceptable under the task's admission policy

Multi-Vault Authorization

The protocol requires:

- every participating Vault to independently verify authorization and consent before executing its slice

- the coordination layer to not override per-Vault authorization decisions

- a Vault that rejects authorization to reduce the available coverage without blocking other Vaults

Composite and Iterative Task Semantics

A composite task is a protocol-recognized task that is decomposed into ordered sub-tasks under a shared admission, authorization, and settlement context. Composite tasks exist because certain platform capabilities, notably distributed training and multi-stage computation, cannot be faithfully represented as a single atomic task lifecycle.

Composite Task Structure

A composite task preserves:

parent_task_id: the root task identitysub_task_sequence: ordered list of sub-task identitiesiteration_policy: rules governing how many rounds may execute and under what convergence or budget conditionsaggregation_rule: how sub-task results are combined into the parent result

Sub-Task Lifecycle

Each sub-task follows the canonical task lifecycle independently. The parent task transitions are governed by aggregation:

- the parent remains

dispatchedwhile any sub-task is in progress - the parent moves to

verifyingonly when all required sub-tasks have reachedawaiting_settlementor the iteration policy declares convergence - if any sub-task is

rejectedortimed_out, the parent may be rejected, retried under policy, or partially settled where policy permits partial acceptance

Iterative Execution Rounds

For training and iterative computation:

- each round produces a sub-task with its own

ExecutionResultBundleandCommitmentreferences - round-to-round state is carried through commitment references, not through plaintext intermediate artifacts

- the iteration policy defines maximum rounds, convergence thresholds, and early-stop conditions

- each round independently consumes privacy budget where applicable

Multi-Vault Composite Interaction

When a composite task requires multi-Vault coordination, the two decomposition mechanisms compose into a three-level hierarchy:

- Parent task: the root composite task admitted at the protocol level

- Sub-task (round): each iterative round, following the composite task lifecycle

- Execution slice (per-Vault): each Vault's contribution within a single round, following the multi-Vault coordination model

The protocol requires:

- each sub-task to carry its own coordination envelope when it requires multi-Vault fan-out

- per-Vault slices within a sub-task to follow the canonical execution slice rules (independent lifecycle, SliceResultBundle, no plaintext exposure)

- secure aggregation to occur at the sub-task level, combining per-Vault slices into the sub-task result before the next round begins

- the parent task to track sub-task completion, not individual slice completion

- a slice failure in one round to affect only that round's sub-task, not the parent or other rounds

This means for a multi-Vault training task with N rounds and M Vaults, the protocol manages: 1 parent task + N sub-tasks + (N × M) execution slices. Each level has its own lifecycle, evidence, and settlement context.

Composite Settlement

The protocol requires:

- composite tasks to settle as a unit after all sub-tasks are verified

- partial settlement to be policy-governed and explicitly declared, not silently assumed

- reward accounting for composite tasks to reflect the aggregate verified contribution across sub-tasks

- the composite settlement context to preserve linkage to every sub-task settlement context

Part 6: Settlement and Delivery

This part defines how execution results are validated, challenged, settled, and delivered to downstream consumers.

Challenge Lifecycle

The canonical challenge lifecycle is:

openedreviewingconfirmednot_confirmedapprovedclosed_without_penaltypenalty_recordedreopened

Challenge evidence must be replayable and linked to the relevant task, verification, and settlement context.

Verification Requirements by Task Class

For parse tasks:

- verify structured output conforms to the resolved semantic schema

- verify commitment linkage between input record and output fields

For query tasks:

- verify result cardinality satisfies

minimum_result_cardinalityfrom the attribute composition - verify privacy budget consumption matches declared

privacy_impact_epsilon - verify audience cap enforcement where applicable

- for multi-Vault queries, verify aggregation evidence and TEE attestation

For compute tasks:

- verify determinism: re-execution under the same inputs must produce the same output commitment

- verify resource consumption did not exceed declared bounds

For train tasks:

- per-round verification: validate each sub-task's TEE attestation and privacy budget consumption

- composite verification: validate convergence state, total privacy budget, and model quality against admission criteria

- verify model artifact does not memorize individual records (membership inference resistance)

Challenge Scope for Composite and Multi-Vault Tasks

The protocol extends the challenge lifecycle for complex tasks:

- a challenge may target a specific per-Vault execution slice, identified by

parent_task_id+vault_id - a challenge may target a specific sub-task round, identified by

parent_task_id+sub_task_sequence_index - a challenge may target the aggregation step, identified by

parent_task_id+aggregation - a challenge may target the composite result as a whole

Challenge consequences for composite tasks:

- if a single slice is challenged and the challenge is approved, the sub-task containing that slice may be re-executed or rejected under policy

- if a sub-task round is challenged and approved, subsequent rounds that depended on it are invalidated

- if the aggregation is challenged and approved, the entire task result is rejected

- challenge against a sub-task does not automatically invalidate the parent unless the sub-task is essential under the iteration policy

Settlement Lifecycle

The canonical settlement lifecycle is:

collectingverifying_inputscandidate_readyfinalizingfinalizedfailed

Settlement Candidate Context

Candidate context is used for:

- challenge opening

- settlement readiness

- fraud evidence linkage

Finalized Settlement Context

Finalized context is used for:

- reward finalization

- protocol audit

- payout preparation

- irreversible accounting references

Result Delivery

SCP defines the protocol boundary for how accepted and settled results become available to downstream consumers.

Delivery Scope

Result delivery governs:

- who may access the finalized result

- what form the delivered result takes

- how delivery is confirmed or acknowledged

Delivery Actors

The protocol recognizes:

task_submitter: always has delivery access to the finalized result of their own taskauthorized_consumer: an actor who holds a delivery grant for a specific settled resultvault_operator: may deliver Vault-local results to the data subject

Delivery Form

The protocol requires:

- delivered results to be derived from finalized settlement context, not from raw execution output

- results containing personally attributable information to remain subject to consent and authorization constraints even after settlement

- the delivered form to be distinguishable from the full protocol settlement context, which may contain internal evidence and accounting references not intended for external consumers

Delivery Confirmation

The protocol requires:

- delivery events to be recorded as protocol-auditable artifacts

- delivery confirmation to not be a prerequisite for settlement finality, which is determined by the settlement lifecycle alone

- failed or refused delivery to not retroactively invalidate finalized settlements

Part 7: Protocol Configuration

This part defines the governing parameters that control protocol behavior: policy versions, epochs, and the determinism requirements that ensure replay.

Policy Version Model

policy_version is a protocol object that captures the full set of configurable protocol parameters active at a given point in time. It exists so that protocol behavior is deterministic and replayable even as operational parameters evolve.

What Policy Version Contains

A policy version governs at least:

- per-

task_classconstraints: TEE requirements, maximum execution time, maximum resource consumption - privacy parameters: default privacy budget allocation per usage scope, minimum result cardinality thresholds, budget replenishment rates

- economic parameters: minimum stake amounts per actor role, reward calculation formulas, penalty severity scales

- admission controls: rate limits, concurrency caps, budget lock requirements per task class

- multi-Vault parameters: default quorum requirements, per-Vault timeout defaults, aggregation environment requirements

- governance parameters: challenge window duration, challenge bond amounts, governance voting thresholds

Policy Version Identity

A policy version should preserve at least:

policy_version_ideffective_from_epoch: the epoch from which this policy version takes effectstatus: one ofdraft,active,deprecated,retiredpredecessor_version_id: the policy version this one replaces

Policy Version Lifecycle

The canonical lifecycle is:

draft: under governance review, not yet enforceableactive: the current enforceable policy version for the governed scopedeprecated: still valid for in-progress tasks admitted under it, but new tasks must use the successorretired: no longer valid for any purpose

At most one policy version should be active for a given governed scope at any time.

Policy Version Transition Rules

The protocol requires:

- policy version transitions to occur only at epoch boundaries

- tasks admitted under a given

policy_versionto be evaluated under that version for their entire lifecycle, even if the policy version is later deprecated - the active

policy_versionat task admission time to be recorded in the task's replay tuple - policy version changes to be announced at least one epoch in advance through governance

- no retroactive application of new policy to already-admitted tasks

Epoch Model

Epoch is a stable window identifier used to group protocol events into a replayable control context.

It is not the prerequisite for raw data upload or for the mere existence of a task.

It primarily governs:

- settlement windows

- reward calculation windows

- payout batching windows

- local attribute candidate aggregation windows

- replay partitioning where batching matters

It does not primarily govern:

- raw data upload into Vault

- whether a task can be formed

- whether semantic resolution can begin

- whether a single authorized execution can start

Core Epoch Fields

An epoch definition should preserve at least:

epoch_idopen_atclose_atstatussemantic_version_setpolicy_version_set- settlement scope metadata

- reward scope metadata

- candidate aggregation scope metadata

Epoch States

The canonical epoch state model is:

openclosingclosed

At most one epoch should normally be open for a given governed scope.

Open Condition

A new epoch opens when:

- the previous epoch has reached

closed, or - the system is creating the initial bootstrap epoch

An open epoch is the active window for assigning later settlement, reward, and aggregation scope.

Closing Triggers

An open epoch should move to closing when one or more of the following occurs:

- the configured maximum time window is reached

- task, verification, settlement, or aggregation volume reaches the configured threshold

- governance or policy requires a window boundary, such as version transition or controlled maintenance

This is a mixed trigger model:

- time may close the window

- capacity may close the window

- governance may close the window

Close Condition

An epoch moves from closing to closed when:

- the settlement scope for that epoch is frozen

- the reward scope for that epoch is frozen

- the candidate aggregation scope for that epoch is frozen

- unresolved items have either been resolved for the current epoch or explicitly deferred under policy

Once an epoch is closed:

- new events must not be newly assigned to it

- replay should reconstruct the same epoch membership

- downstream reward, payout, and candidate aggregation can proceed against a stable window boundary

Epoch and Composite Task Interaction

Composite tasks may span multiple epochs. The protocol defines:

- the parent task is assigned to the epoch in which it was admitted

- each sub-task is assigned to the epoch that is

openat the time the sub-task is dispatched - if an epoch closes while a sub-task is in progress, the in-progress sub-task retains its assigned epoch; subsequent sub-tasks are assigned to the next epoch

- composite settlement aggregates sub-task settlements across their respective epochs

- reward accounting for each sub-task follows the epoch to which that sub-task was assigned

- if an epoch closes while the parent task has no dispatched sub-tasks remaining, the parent may be deferred to the next epoch under policy or timed out

Determinism and Replay

Determinism requires:

- fixed object semantics

- fixed sort and hash rules

- stable epoch mapping

- version-aware parameter resolution

- replayable evidence and accounting artifacts

Parameter resolution must be a deterministic function of:

epoch_idsemantic_versionpolicy_version

Part 8: Contract Boundary

Conforming Implementation Requirements

Conforming implementations must expose protocol coverage for at least:

- task and semantic messages, including task classification and composite task structures

- consent and authorization messages

- execution and verification messages, including TEE attestation where applicable

- multi-Vault coordination messages, including coordination envelopes and slice results

- derivation rule registrations and attribute composition declarations

- settlement messages

- reward records

- payout instructions and events

- privacy budget consumption records

- result delivery events

The current canonical source of truth is the Markdown specification set. Machine-readable schema artifacts are implementation deliverables defined by SCS.

Canonical Error Families

Protocol-visible error families include:

SCHEMA_*VERSION_*AUTH_*CONSENT_*POLICY_*EXECUTION_*PROOF_*TEE_*VERIFY_*COORDINATION_*DERIVATION_*COMPOSITION_*PRIVACY_BUDGET_*SETTLEMENT_*REWARD_*PAYOUT_*DELIVERY_*GOVERNANCE_*INTERNAL_*